Db2 for z/OS initial setup for CDC

Db2 for z/OS system requirements

Performing CDC from Db2 for z/OS with Striim requires a Linux server to host the Db2 Connect and Striim Agent for Db2 for z/OS and, depending on your environment, possibly also PostgreSQL and Confluent. Kafka and curl must be installed.

Db2 for z/OS setup overview

The following are prerequisites for setting up CDC from Db2 for z/OS:

Purchase a Striim Db2 for z/OS Support license. Striim will then procure you a Striim Agent for Db2 for z/OS license.

Install the stored procedures provided by Striim in Db2 for z/OS.

Transfer the program binaries (MVSLOAD.BIN) to the Mainframe server and perform a TSO Receive Operation.

APF authorise the received Load library. ( This task may need to be executed with z/OS System Programmer or z/OS System Administrator personnel.)

Define the Load library to the Db2 Application environment.

Define the DB2 User defined function.

Get Db2 Connect (see Db2 Connect overview) from IBM and install the IBM ODBC driver on the Linux host where you will install Striim Agent for Db2 for z/OS. Alternatively, if you already have DRDA in your Db2 for z/OS environment, you may use that.

Have an Oracle or PostgreSQL repository for use by Striim Agent for Db2 for z/OS.

Install the ODBC driver for the Oracle or PostgreSQL repository on the Linux host where you will install Striim Agent for Db2 for z/OS.

Provision Kafka to receive data from the Striim Agent for Db2 for z/OS. See Enabling external Kafka access.

Enable the Karapace schema registry. See Enabling Schema Registry.

In Db2 for z/OS, if any tables to be captured do not have primary keys, add them. (Striim Agent for Db2 for z/OS cannot capture change data from tables without primary keys.)

In Db2 for z/OS, enable data capture flags.

Create and deploy a Striim application including the Kafka-persisted stream to which Striim Connect will write the data captured by Striim Agent for Db2 for z/OS. The persisted stream details must be specified in the configuration file in the next step.

Populate the

RDRS_DB2zOS.propertiesconfiguration file. This is required by the setup script in the next step.Run the script to Install, configure, and start Striim Agent for Db2 for z/OS.

Allow-list the IP address of the machine running Striim Agent for Db2 for z/OS in the Striim Cloud console.

Download and install the mTLS certificates required for secure communication between Striim Agent for Db2 for z/OS and the Striim Cloud Kafka cluster.

1. Purchase Striim Db2 for z/OS Support license

Contact Striim Sales to purchase Db2 for z/OS Support.

2. Install the stored procedures in Db2

This part of the setup requires expertise in IBM z/OS mainframe administration. It involves loading binary file(s) onto the mainframe and implementing (installing) the binary file(s) as datasets and load libraries on the mainframe. The load library contains JCL (Job Control Language) scripts, which will be called and used via the Db2 stored procedure. This is the stored procedure that will be added onto the Db2 database once the load library has been properly loaded onto the mainframe. Our recommendation is to have your mainframe administrator handle this setup. Alternatively, you may engage Striim professional services.

Contact Striim Support for detailed instructions for performing this step, which requires a z/OS terminal emulator.

After completing the mainframe setup, load the stored procedure by running the following command in the SQL command executor of your choice, replacing <environment name> with the name of your WLM application environment (for example, WLM ENVIRONMENT DBDGENVG.

CREATE FUNCTION TCVUDT_V7 (VARBINARY(300),VARBINARY(32000)) RETURNS TABLE (OUTVALUE BLOB(200K)) PARAMETER CCSID EBCDIC EXTERNAL NAME TCSD2UDT LANGUAGE ASSEMBLE PROGRAM TYPE SUB STAY RESIDENT YES CONTINUE AFTER FAILURE WLM ENVIRONMENT <environment name> RUN OPTIONS 'H(,,ANY),STAC(,,ANY,),STO(,,,4K),BE(4K,,),LIBS(4K,,),ALL31(ON)' PARAMETER STYLE SQL NO SQL NO EXTERNAL ACTION FENCED SCRATCHPAD FINAL CALL DISALLOW PARALLEL;

3. Get and install Db2 Connect

Contact IBM to purchase Db2 Connect (see Db2 Connect overview). This includes support for secure SSL / TLS connections with Striim. IBM offers several distributions, including the full Client, Runtime Client, and the ODBC and CLI package. We recommend using the IBM Data Server Driver for ODBC and CLI version 11.5.9 (or a later version of 11.5, if available), as it is the most lightweight option and requires the least installation effort. You may download it from DB2 ODBC CLI driver Download and Installation information.

Perform the following steps on the Linux host where Striim Agent for Db2 for z/OS is installed.

Create a directory for the installation of the IBM Data Server Driver for ODBC and CLI software.

mkdir $HOME/db2_cli_odbc_driver

Copy the IBM Data Server Driver for ODBC and CLI software (vxx_xx_odbc_cli.tar.gz) into the above directory.

cp vxx_xx_odbc_cli.tar.gz $HOME/db2_cli_odbc_driver

Extract the IBM Data Server Driver for ODBC and CLI.

tar -xvf vxx_xx_odbc_cli.tar

Export the following environment variables.

export DB2_CLI_DRIVER_INSTALL_PATH=$HOME/db2_cli_odbc_driver/odbc_cli/clidriver export LD_LIBRARY_PATH=$HOME/db2_cli_odbc_driver/odbc_cli/clidriver/lib export LIBPATH=$HOME/db2_cli_odbc_driver/odbc_cli/clidriver/lib export PATH=$HOME/db2_cli_odbc_driver/odbc_cli/clidriver/bin:$PATH export PATH=$HOME/db2_cli_odbc_driver/odbc_cli/clidriver/adm:$PATH

To connect to the Db2 for z/OS server, download the license file db2consv_xx.lic and copy it to the license folder.

$HOME/db2_cli_odbc_driver/odbc_cli/clidriver/license

To configure the driver with a new connection:

Add the database.

db2cli writecfg add -database <database name> -host <IP address> -port <port>

Add the data source name (dsn).

db2cli writecfg add -dsn db2z13 -database <database name> -host <IP address> -port <port>

You can then test the connection with the following command.

db2cli validate -dsn db2z13 -connect -user <user name> -passwd <password>

4. Select the Oracle or PostgreSQL instance to host the Striim Agent for Db2 for z/OS repository

Striim Agent for Db2 for z/OS requires an Oracle or PostgreSQL instance to host its repository. If you do not have an Oracle or PostgreSQL instance available to host the Striim Agent for Db2 for z/OS repository, we recommend installing PostgreSQL (a free open-source DBMS). You may install it on the same Linux server you use to host Striim Agent for Db2 for z/OS.

Also install the ODBC driver for the repository database in the Striim Agent for Db2 for z/OS host. For Oracle, see DB2 ODBC CLI driver Download and Installation information. For PostgreSQL, use your Linux environment's package manager, such as yum or apt-get.

5. Install the ODBC driver for the repository

Install the ODBC driver for the Oracle or PostgreSQL repository on the Linux host where you will install Striim Agent for Db2 for z/OS.

6. Provision Kafka to receive data from the Striim Agent for Db2 for z/OS

Enable external Kafka access for publishing data so that Striim Agent for Db2 for z/OS can publish CDC events to the Striim Cloud Kafka cluster.

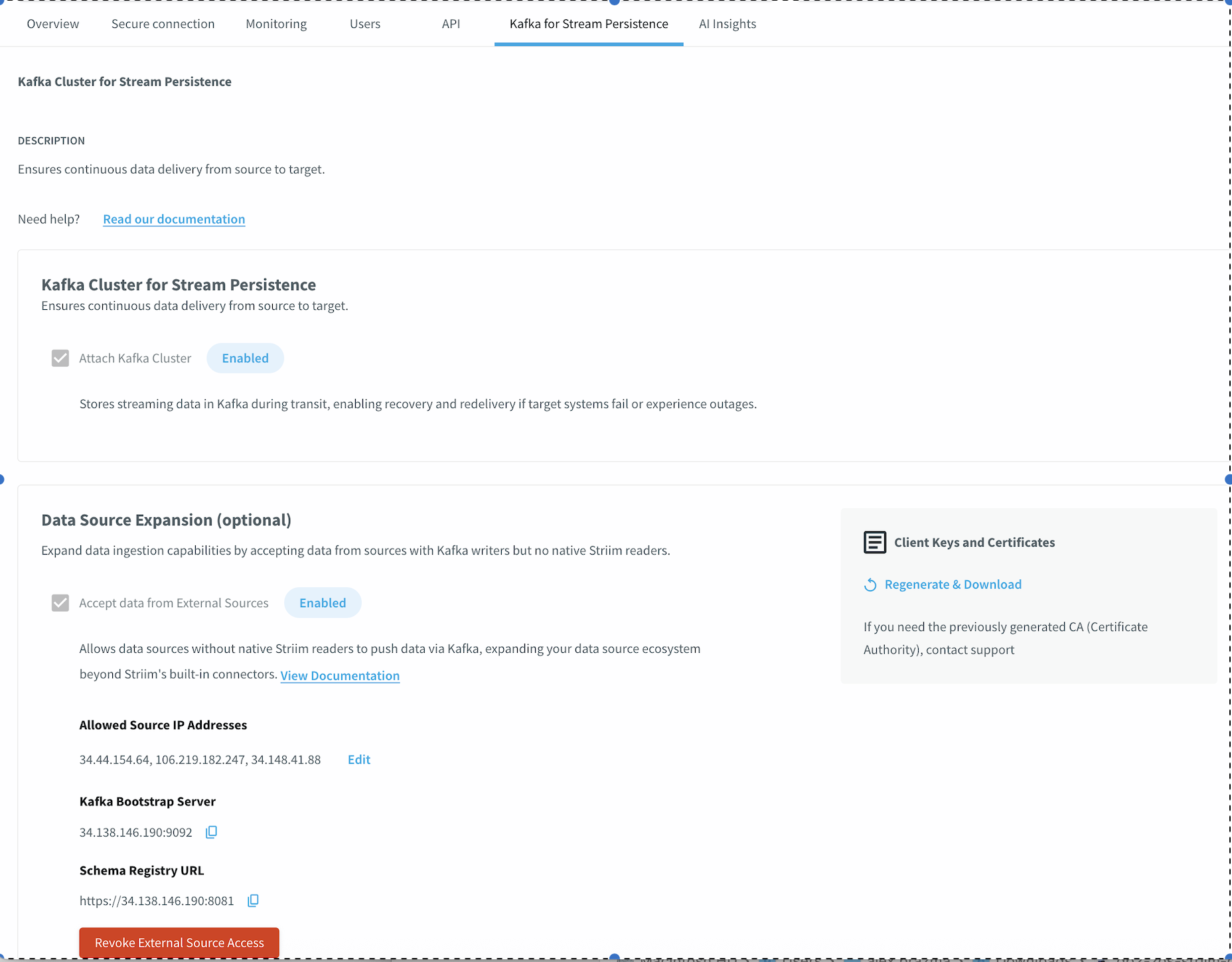

In the Striim Cloud console, go to the Kafka for Stream Persistence tab.

Under Kafka Cluster for Stream Persistence, select Attach Kafka Cluster.

Under Data Source Expansion, select Accept data from External Sources.

In the Allowed Source IP Addresses field, enter the IP address of the machine where Striim Agent for Db2 for z/OS is running. To specify multiple IP addresses, separate them with commas.

Click Attach.

After the Kafka cluster is attached, the console displays the Kafka Bootstrap Server address and Schema Registry URL. Record these values for use in the Striim Agent for Db2 for z/OS configuration file.

7. Enable the Karapace schema registry

The Karapace schema registry is automatically provisioned when you enable external Kafka access in the previous step. The Schema Registry URL is displayed on the Kafka for Stream Persistence tab after attachment. Use this URL when configuring the SCHEMA_REGISTRY property in the Striim Agent for Db2 for z/OS configuration file and the schemaRegistry property in the Striim application TQL.

8. Add primary keys

In Db2, if any tables to be captured do not have primary keys, add them. (Striim Agent for Db2 for z/OS cannot capture change data from tables without primary keys.) Note that the VARBINARY type is supported in composite primary keys only if it is the last column in the key.

9. Enable capture flags

In Db2, enable capture flags by executing the following command for each table to be captured:

ALTER TABLE <schema>.<table name> DATA CAPTURE CHANGES

10. Create and deploy the Striim application

Create an application to create the persisted stream that must be referenced in the Striim Agent for Db2 for z/OS configuration file. Creating a persisted stream also creates a topic of the same name in Kafka.

The following example TQL creates the application using the admin.MTLSCloudKafkaProperties property set, which is pre-configured with the mTLS certificates required for secure communication with the internal Kafka cluster. You can view the values in this property set by navigating to Manage Striim > Property Sets and selecting MTLSCloudKafkaProperties.

Before configuring the TQL, determine the <id> value for the UploadedFiles path by navigating to Manage Striim > Property Sets and inspecting the MTLSCloudKafkaProperties property set. The <id> appears in the keystore and truststore file paths.

USE ns1;

CREATE APPLICATION Db2zOS_CDC;

CREATE STREAM Db2zOSStream OF WAEvent

PERSIST USING admin.MTLSCloudKafkaProperties;

CREATE EXTERNAL SOURCE Db2zOSReader (

connector: 'db2',

dataFormat: 'AvroExternalDb2zOS',

schemaRegistry: 'https://schema-registry.kafka.svc.cluster.local:8081',

SchemaRegistryConfig: 'ssl.keystore.location=/opt/striim/UploadedFiles/<id>/kafka.client.keystore.jks,

ssl.keystore.password=<password>,

ssl.key.password=<password>,

ssl.truststore.location=/opt/striim/UploadedFiles/<id>/kafka.client.truststore.jks,

ssl.truststore.password=<password>'

)

OUTPUT TO Db2zOSStream;

END APPLICATION Db2zOS_CDC;

11. Populate the Striim Agent for Db2 for z/OS configuration file

When configuring Striim Agent for Db2 for z/OS for Striim Cloud, use the Kafka Bootstrap Server address and Schema Registry URL from the Striim Cloud console. Set KAFKA_USESSL to true and specify the paths to the mTLS certificate files downloaded in step 14.

The setup script you will run in the next step requires a configuration file with the following contents. In this context, localhost is the system running Striim Agent for Db2 for z/OS.

If you create multiple Striim Agent for Db2 for z/OS pipelines, each needs a separate configuration file. The SOURCE_NAME, TARGET_NAME, and TOPIC must be different for each pipeline. TABLE_REGEX will typically be different and SCHEMA_REGEX may be different. The other properties typically will be the same.

Property name | Description | Syntax | Example(s) | notes |

|---|---|---|---|---|

LIBRDKAFKA_PATH | path to the Kafka shared object library for Linux | absolute file path to Kafka library (librdkafka.so) | /usr/lib/x86_64-linux-gnu/librdkafka.so.1 |

|

LIBCURL_PATH | path to the curl shared object library for Linux | absolute file path to CURL library (libcurl.so) | /usr/lib/x86_64-linux-gnu/libcurl.so.4 |

|

LICENSE_PATH | path to the Striim Agent for Db2 for z/OS license file | absolute file path to Striim Agent for Db2 for z/OS license file | /home/ubuntu/License.tcVLC | file will be provided by Striim Support |

REPO | database type of the Striim Agent for Db2 for z/OS repository host | ORCL for Oracle Database or PSQL for PostgreSQL | PSQL ORCL | |

REPO_HOST | Striim Agent for Db2 for z/OS repository database hostname | IP Address or hostname | localhost 192.168.0.5 | |

REPO_PORT | Striim Agent for Db2 for z/OS repository database port | port number | 1521 5432 | |

REPO_DB | Striim Agent for Db2 for z/OS repository database name | database name | ORCL postgres | |

REPO_ID | Striim Agent for Db2 for z/OS repository database user id | SQL ID for repository database | admin postgres | |

REPO_SCHEMA | schema to be used exclusively for the Striim Agent for Db2 for z/OS repository | repository database schema name | SA4DB2 | |

DB2_ALIAS | Name of Db2 alias registered on Db2 Client | database alias defined on the Db2 Client | DB2Z | |

DB2_ID | user id of the source Db2 | SQL ID for source Db2 user | IBMUSER | |

DB2_HOST | Db2 host name | DNS host name or IP address | db2host | |

DB2_PORT | Db2 host port | port number | 5045 | |

DB2_DB | Db2 database name | database name | MYDB | |

SOURCE_NAME | name to be set for this new Db2 source on SA4DB2 | user preferred source name | Db2_zOS_Source | must be different for each SA4DB2 pipeline |

SCHEMA_REGEX | schema(s) to read | regular expression for schemas to read | SCHEMA1|SCHEMA2 | Regex documentation will be provided by Striim. To specify multiple schemas, separate with | (pipe) characters. |

TABLE_REGEX | tables to read | regular expression for tables to read | ^.*$ TABLE1|TABLE2 | Regex documentation will be provided by Striim.

To specify multiple tables, separate with | (pipe) characters. |

db2compdict | include Db2 compression dictionaries (Y or N) | Boolean Y/N | Y N | |

TARGET_NAME | name to be set for the target on SA4DB2 | user-specified target name | zOS_Kafka | must be different for each SA4DB2 pipeline |

KAFKA_BROKER | hostname and port for the Kafka broker | host name or IP with port separated by colon (:) | localhost:9092 | In Striim Cloud, use the Kafka Bootstrap Server address from the Kafka for Stream Persistence tab. |

TOPIC | name of the Kafka topic / persisted stream | namespace, underscore, name of the Kafka topic / persisted stream | using the sample TQL above, the value would be | |

SCHEMA_REGISTRY | hostname and port for the Confluent Schema Registry | host name or IP with port separated by colon (:) | localhost:8081 | In Striim Cloud, use the Schema Registry URL from the Kafka for Stream Persistence tab. |

KAFKA_USESSL | true / false | true to use SSL, otherwise false | true | Required. Set to |

KAFKA_SSLCLIENTCERT | path to client certificate | Path to the | ||

KAFKA_SSLCERT | path to CA certificate | Path to the | ||

KAFKA_SSLKEY | fully qualified name of SSL key file | Path to the | ||

KAFKA_SSLKEYPWD | SSL key password | If no password is required, specify a placeholder value. | ||

PROCESS_NAME | name of the SA4DB2 process to be set for CDC process | user-specified pipeline/process name | zOS_CDC | |

RBA_LRSN | LRSN or RBA value to start reading from in the Db2 log | hex string of the LRSN or RBA value | 000000000000FA3E4376 | Required only when specifying a specific start point of CDC pipeline. If not specified, reading will start with new data, that is, from the end of the log |

Example configuration file

Example configuration file with PostgreSQL repository, to capture change data from all tables in MYSCHEMA1 and MYSCHEMA2, starting with new data (that is, from the latest index, since RBA_LRSN is not specified):

# ----------------------------- # Library Paths # ----------------------------- LIBRDKAFKA_PATH=/usr/lib/x86_64-linux-gnu/librdkafka.so.1 LIBCURL_PATH=/usr/lib/x86_64-linux-gnu/libcurl.so.4 LICENSE_PATH=/home/ubuntu/License.tcVLC # ----------------------------- # Repository Configuration # ----------------------------- REPO=PSQL REPO_HOST=192.0.2.1 REPO_PORT=5432 REPO_DB=postgres REPO_ID=postgres REPO_SCHEMA=sa4db2 # ----------------------------- # Source (DB2) Configuration # ----------------------------- DB2_ALIAS=DB2Z # Used with DB2_connect DB2_HOST=192.0.2.2 # Used with DRDA DB2_PORT=5045 # Used with DRDA DB2_DB=DALLASD # Used with DRDA DB2_ID=IBMUSER SCHEMA_REGEX=IBMUSER TABLE_REGEX=^.*$ db2compdict=N # ----------------------------- # Target (Kafka) Configuration # ----------------------------- KAFKA_BROKER=192.0.2.3:9092 TOPIC=ns1_Db2zOSStream SCHEMA_REGISTRY=192.0.2.4:8081 KAFKA_USESSL=true KAFKA_SSLCLIENTCERT=/path/to/client.crt KAFKA_SSLCERT=/path/to/ca.crt KAFKA_SSLKEY=/path/to/client.ke KAFKA_SSLKEYPWD=password # ----------------------------- # Process Configuration # ----------------------------- SOURCE_NAME=Db2zOS TARGET_NAME=StriimKafka PROCESS_NAME=zOS_CDC

12. Run the Striim Agent for Db2 for z/OS setup script

Prerequisites

After you purchase Db2 for z/OS Support, Striim Support will provide you with a Striim Agent for Db2 for z/OS installation bundle, which includes the Striim Agent for Db2 for z/OS distribution, a sample configuration file, and a set of scripts for installation and other tasks.

Striim Support will also provide you with a Striim Agent for Db2 for z/OS license file, the path to which must be specified in the configuration file.

All properties in he Striim Agent for Db2 for z/OS configuration file must be specified as described in the previous step.

If the Striim Agent for Db2 for z/OS repository will be hosted on PostgreSQL, the

psqlcommand-line terminal must be available on the Striim Agent for Db2 for z/OS host.If the Striim Agent for Db2 for z/OS repository will be hosted on Oracle, the

sqlpluscommand-line terminal must be available on the Striim Agent for Db2 for z/OS host.

To install and configure Striim Agent for Db2 for z/OS, initialize the Striim Agent for Db2 for z/OS repository database, create a Striim Agent for Db2 for z/OS pipeline to send data from Db2 to the Striim application's Kafka-persisted stream (external source), start the Striim Agent for Db2 for z/OS, start the pipeline, extract the installation bundle on the Striim Agent for Db2 for z/OS host system, and run the following full command:

setupDb2.sh -a full -s <db2 password> -r <Striim Agent for Db2 for z/OS repository password> -p <path to configuration file>

Alternatively, you may run the following install command to install and configure Striim Agent for Db2 for z/OS and initialize the Striim Agent for Db2 for z/OS repository database without creating a pipeline:

setupDb2.sh -a install -r <Striim Agent for Db2 for z/OS repository password> -p <path to configuration file>

Then use the create command to create one or more pipelines. Each pipeline must have a different configuration file and write to a different Kafka-persisted stream (external source) in Striim.

setupDb2.sh -a create -s <db2 password> -r <Striim Agent for Db2 for z/OS repository password> -p <path to configuration file>

Then use the start command to run the pipeline.

setupDb2.sh -a start -p <path to configuration file>

Once a Striim Agent for Db2 for z/OS pipeline is running you may start the corresponding Striim application.

Note

In the event of a disaster recovery failover, you must reconfigure the Striim Agent for Db2 for z/OS pipeline with the new static IP addresses for Kafka and Schema Registry in the configuration file, then re-run the setup script.

13. Allow-list the Striim Agent for Db2 for z/OS

The IP address of the machine running Striim Agent for Db2 for z/OS must be allow-listed in the Striim Cloud console so that the agent can publish CDC events to the Kafka cluster. If you specified the agent's IP address during Kafka provisioning in step 6, this step is already complete.

To update the allowed IP addresses after initial provisioning:

In the Striim Cloud console, go to the Kafka for Stream Persistence tab.

Under Data Source Expansion, click Edit next to the displayed Allowed Source IP Addresses.

Add or remove IP addresses as needed. Separate multiple IP addresses with commas.

Save the changes.

14. Download and install mTLS certificates

Striim Cloud uses mTLS (mutual TLS) certificates to secure communication between Striim Agent for Db2 for z/OS and the Kafka cluster. You must download the certificate files from the Striim Cloud console and configure Striim Agent for Db2 for z/OS to use them.

In the Striim Cloud console, go to the Kafka for Stream Persistence tab.

Under Data Source Expansion, locate the Client Keys and Certificates section.

Click Regenerate & Download to download the certificate bundle. Extract the ZIP file to obtain three files:

ca.crt,client.crt, andclient.key.Copy these files to the machine running Striim Agent for Db2 for z/OS.

In the Striim Agent for Db2 for z/OS configuration file, set the

KAFKA_SSLCLIENTCERT,KAFKA_SSLCERT,KAFKA_SSLKEY, andKAFKA_SSLKEYPWDproperties to the paths and password for the downloaded certificate files.

Note

The CA certificate (ca.crt) can only be downloaded during initial generation. If you need the previously generated CA certificate, contact Striim support to obtain a replacement. The client certificate and key files can be regenerated and downloaded at any time.

Note

When a certificate expires, download the renewed certificates using Regenerate & Download and update them on the Striim Agent for Db2 for z/OS host.

Enabling SSL / TLS between Db2 and Striim Agent for Db2 for z/OS

See Configuring connections under the IBM Data Server Driver for JDBC and SQLJ to use TLS.